4/21/2026

Mobile App Development Firms: How to Compare Like a CTO

Compare mobile app development firms like a CTO: evaluate evidence, code quality, CI/CD, security, release process, and contracts with a practical scorecard.

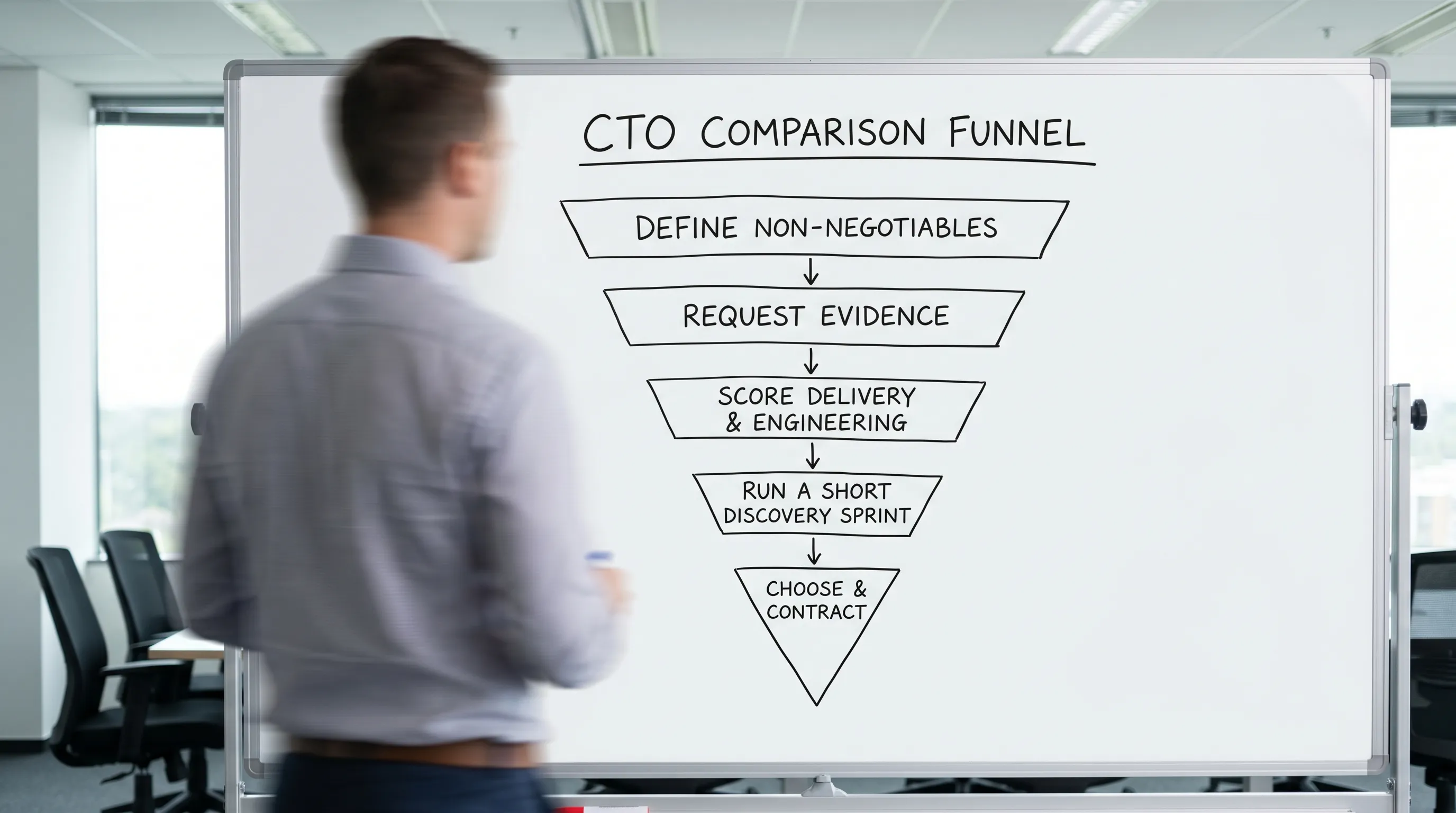

Most founders compare agencies by portfolio screenshots, hourly rates, and promises about speed. A CTO compares risk: will this team ship reliably, protect users’ data, and leave you with a codebase you can own and scale?

This guide gives you a CTO-style framework to compare mobile app development firms, with concrete artifacts to request, questions to ask, and a simple scorecard you can use across vendors.

What you are really buying from a mobile app development firm

A mobile app is not just “features on a timeline.” It is a production system that must survive:

- App Store and Google Play policy changes

- OS updates and new devices

- Real-world networks (offline, flaky, slow)

- Security and privacy expectations

- A long tail of bug fixes, performance work, and iteration

So when you evaluate mobile app development firms, you are evaluating two systems:

- Engineering system: architecture, code quality, testing, security, performance.

- Delivery system: planning, estimation, CI/CD, QA, release management, incident handling, communication.

A beautiful portfolio can hide weak delivery. A modest portfolio can hide excellent delivery. CTO-style comparison focuses on evidence.

Step 1: Define “technical success” before you compare firms

If you do not define technical success, every firm will look “qualified,” and you will default to price or aesthetics.

Create a one-page set of non-negotiables. Think in terms of measurable outcomes, not tech buzzwords.

| Category | Examples of CTO-level non-negotiables | Why it matters when comparing firms |
|---|---|---|
| Reliability | Crash-free sessions target, graceful offline behavior, safe retries | Prevents post-launch fire drills and bad reviews |
| Performance | Cold start expectations, scroll performance, app size budget | Mobile users churn fast when apps feel slow |
| Security & privacy | Data minimization, encryption, secrets handling, secure auth | Reduces breach and compliance risk |
| Release velocity | Ability to ship weekly, hotfix process, rollback/kill switch approach | Keeps product iteration fast after launch |
| Observability | Crash reporting, logs/metrics strategy, performance monitoring | Lets you debug real issues quickly |
| Ownership | Clean repo, documentation, handover plan, predictable dependencies | You must be able to maintain it later |
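
One way to keep non-negotiables like these measurable is to write them down as explicit thresholds and check reported numbers against them. The sketch below is illustrative only: the metric names and target values are hypothetical placeholders, not recommendations from this guide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    """A single measurable non-negotiable (names and limits are hypothetical)."""
    metric: str
    limit: float
    higher_is_better: bool

    def is_met(self, observed: float) -> bool:
        # A "higher is better" metric must reach the limit; any other must stay at or under it.
        if self.higher_is_better:
            return observed >= self.limit
        return observed <= self.limit


# Placeholder targets; replace them with the numbers your product actually needs.
TARGETS = [
    Target("crash_free_sessions_pct", 99.5, higher_is_better=True),
    Target("cold_start_ms", 2000, higher_is_better=False),
    Target("app_size_mb", 60, higher_is_better=False),
]


def unmet_targets(observed: dict[str, float]) -> list[str]:
    """Return the metrics that miss their target or were not reported at all."""
    failures = []
    for target in TARGETS:
        value = observed.get(target.metric)
        if value is None or not target.is_met(value):
            failures.append(target.metric)
    return failures
```

Treating a missing metric as a failure is a deliberate choice in this sketch: a vendor that cannot report a number has not shown that the target is met.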

If your app touches regulated workflows (health, finance, legal), add stricter constraints. For example, products in litigation workflows like TrialBase AI highlight how sensitive case files and source-cited outputs raise the bar for privacy, security controls, and correctness.

Step 2: Ask for evidence, not assurances

A CTO does not accept “yes, we do that.” They ask, “show me how you do it.”

Request artifacts that reveal how a firm actually builds.

| Artifact to request | What good looks like | Red flags |
|---|---|---|
| Sample PR (or anonymized repo excerpt) | Small PRs, clear descriptions, tests included, review comments | Giant PRs, no tests, unclear changes |
| Architecture note (RFC-style) | Tradeoffs, assumptions, risks, upgrade plan | Only diagrams, no reasoning |
| Test strategy | Unit/integration/UI coverage goals, what is automated, device matrix | “We test manually at the end” |
| CI/CD overview | Automated builds, checks, signing, staged releases | Builds done on someone’s laptop |
| Release runbook | Steps, rollout strategy, monitoring, rollback plan | “We submit and hope” |
| Post-launch support approach | SLA expectations, triage, incident comms | No clear owner after launch |

If a vendor cannot share anything due to “confidentiality,” ask for anonymized samples. Serious teams usually have them.

Step 3: Compare engineering quality the way a CTO would

Architecture that matches your product (not their template)

You are looking for a firm that can explain why they chose an approach for your constraints.

Signals to listen for:

- They ask about growth, feature volatility, offline needs, and platform-specific UX.

- They discuss boundaries (UI vs domain vs data), not just “we use MVVM” or “we use Clean Architecture.”

- They plan for OS evolution (permissions, background work, notifications, privacy changes).

A strong vendor will also be honest about complexity. Over-architecting an MVP is a real risk.

Testing that is designed for speed, not perfection theater

Mobile teams that ship reliably usually combine:

- Unit tests for business logic

- Integration tests for networking/data boundaries

- A smaller set of critical UI tests

The question is not “do you write tests?” but “do tests reduce release risk without slowing delivery to a crawl?” Ask how often tests break builds, how they handle flaky UI tests, and what they do when deadlines put quality under pressure.
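
When you ask about flaky tests, it helps to agree on a definition first. One common working definition is a test that both passed and failed against the same commit. The sketch below assumes CI results can be exported as (commit, test name, passed) records. That record shape and every name in it are hypothetical, not the format of any particular CI product.

```python
from collections import defaultdict
from typing import Iterable, NamedTuple


class CiRun(NamedTuple):
    """One test execution exported from CI (hypothetical record shape)."""
    commit: str      # commit hash the test ran against
    test_name: str
    passed: bool


def find_flaky_tests(runs: Iterable[CiRun]) -> set[str]:
    """Return tests that produced both a pass and a fail on the same commit."""
    outcomes = defaultdict(set)  # (commit, test_name) -> set of observed results
    for run in runs:
        outcomes[(run.commit, run.test_name)].add(run.passed)
    # Two distinct outcomes for identical code mean the result is not deterministic.
    return {test for (_, test), seen in outcomes.items() if len(seen) == 2}
```

A test that passes on one commit and fails on another is not flagged here, because a real code change could explain the difference.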

Code review culture

You can learn a lot from how they talk about reviews:

- Do seniors actively review and mentor?

- Are reviews used to enforce standards and reduce defects?

- Do they have a consistent definition of done?

If the answer sounds like “we review when we have time,” expect inconsistent quality.

Step 4: Compare delivery operations (this is where most teams fail)

Many apps “get built.” Far fewer get shipped smoothly, then iterated without chaos.

CI/CD and release engineering

A CTO-grade firm should be comfortable explaining:

- How builds are created and signed

- How environments are managed (dev/staging/prod)

- How config changes are handled safely

- How releases are staged (internal testers, beta, phased rollout)

Ask what happens when Apple or Google rejects a build and you need a same-day fix. If the process depends on a single person, your schedule is fragile.
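
Store-managed phased rollouts are configured in the store consoles, but some teams also gate risky features behind server-controlled flags so a problem can be switched off without waiting for a new build. The sketch below shows deterministic percentage bucketing, one common way to implement that idea. The function names, the flag name, and the `kill_switch` parameter are hypothetical, and a firm may well use a different mechanism.

```python
import hashlib


def rollout_bucket(user_id: str, feature: str) -> int:
    """Map a user to a stable bucket in [0, 100) for a given feature."""
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).digest()
    # Use the first 8 bytes as an integer so the same input always gives the same bucket.
    return int.from_bytes(digest[:8], "big") % 100


def is_enabled(user_id: str, feature: str, rollout_percent: int, kill_switch: bool = False) -> bool:
    """Enable the feature for roughly rollout_percent of users unless the kill switch is on."""
    if kill_switch:
        return False
    return rollout_bucket(user_id, feature) < rollout_percent
```

Because the bucket depends only on the user and feature, raising `rollout_percent` from 10 to 50 keeps the original 10% enabled and adds new users on top, which is what makes a staged rollout predictable.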

App Store readiness is not a last-week chore

Store submission involves privacy declarations, permission strings, reviewer notes, policy compliance, and edge-case testing. Firms that treat it as a final checkbox often lose time right before launch.

If you want a practical view of what “ready” means, Appzay has a dedicated guide you can reference during vendor conversations: App Store submission checklist.

Step 5: Compare security and privacy posture (especially in 2026)

Mobile security is now a product requirement, not an enterprise luxury. Even consumer apps are expected to handle data responsibly.

A serious firm should be able to walk you through:

- Threat modeling (even lightweight)

- Secure storage choices (keychain/keystore usage)

- Network security (TLS, certificate pinning where appropriate)

- Secrets management (no secrets in the client)

- Third-party SDK governance
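
To make “no secrets in the client” concrete, you can ask whether the firm scans its repositories for hard-coded credentials. The sketch below is a deliberately small illustration of that idea. The three patterns are examples chosen for this sketch, and dedicated scanning tools cover far more cases.

```python
import re

# Illustrative patterns only; real scanners ship far larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN (?:RSA |EC )?PRIVATE KEY-----"),
    "hardcoded_credential": re.compile(
        r"(?i)\b(?:api[_-]?key|secret|password)\b\s*[:=]\s*[\"'][^\"']{8,}[\"']"
    ),
}


def scan_for_secrets(source: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for each line that matches a pattern."""
    findings = []
    for line_number, line in enumerate(source.splitlines(), start=1):
        for rule_name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, rule_name))
    return findings
```

A check like this is most useful when it runs automatically on every change, so a leaked key is caught before it ships inside an app binary.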

If you need a baseline reference for what “good” looks like, OWASP’s mobile guidance is a useful standard to mention in conversations (for example, the OWASP Mobile Application Security Verification Standard, or MASVS).

Also ensure they understand platform policies and privacy expectations. Apple’s App Store Review Guidelines are worth citing when you evaluate how a firm thinks about compliance-driven UX.

Step 6: Compare backend and scaling thinking (even if you start small)

Even if your first release is an MVP, vendor choices can create future bottlenecks.

Evaluate whether the firm:

- Clarifies what must be real-time vs eventually consistent

- Plans an API contract strategy and versioning approach

- Designs for observability (logs, metrics, traces) across mobile and backend

- Anticipates data migration and schema evolution

You do not need “distributed systems” for every MVP. You do need a partner who can justify the simplest architecture that will survive your next 12 months.

Step 7: Compare the team model and seniority mix

A CTO asks, “who exactly will do the work, and who is accountable?”

Be specific:

- Who is the tech lead, and how much time are they allocated?

- How many projects is the lead juggling?

- Who owns releases and on-call responses post-launch?

- What is the communication cadence, and who joins which meetings?

A common failure mode is a strong sales process followed by a junior-heavy delivery team. Ask for the exact team composition that would be assigned to your project.

Step 8: Compare contracts like an engineering leader (not just a buyer)

You do not need to be adversarial, but you do need clarity.

Look for crisp language on:

- IP ownership and access (repos, accounts, certificates)

- Definition of done (including QA, performance checks, store readiness)

- Change control (how scope changes are priced and approved)

- Warranty period and post-launch support terms

- Documentation and handover expectations

If a firm cannot explain how they handle change requests without derailing the schedule, expect surprises.

A practical scorecard to compare mobile app development firms

Use a single scorecard across vendors to reduce bias.

| Dimension | What you score | Suggested weight |
|---|---|---|
| Product and UX partnership | Discovery, prototyping, decision quality | 15% |
| Mobile engineering quality | Architecture, testing, code review | 20% |
| Delivery operations | CI/CD, QA, release management | 20% |
| Security and privacy | Threat awareness, practices, compliance | 15% |
| Backend and integration | API design, scalability thinking | 10% |
| Communication and ownership | Accountability, cadence, transparency | 10% |
| Commercial fit | Pricing clarity, change control, support | 10% |

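How to use it: score each category from 1 to 5, multiply each score by its weight, and add up the results. Because the weights sum to 100%, every vendor’s total falls between 1 and 5, so totals are directly comparable. More importantly, compare the “why” behind each score.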
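
The arithmetic can be sketched in a few lines. The weights below are the suggested weights from the table. The dimension keys and the example scores are made up for illustration.

```python
# Suggested weights from the scorecard table, expressed as fractions of 1.
WEIGHTS = {
    "product_ux": 0.15,
    "engineering_quality": 0.20,
    "delivery_operations": 0.20,
    "security_privacy": 0.15,
    "backend_integration": 0.10,
    "communication_ownership": 0.10,
    "commercial_fit": 0.10,
}


def weighted_total(scores: dict[str, int]) -> float:
    """Combine 1-5 scores into one weighted total between 1 and 5."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("score every dimension exactly once")
    if any(not 1 <= value <= 5 for value in scores.values()):
        raise ValueError("scores must be between 1 and 5")
    return round(sum(WEIGHTS[name] * value for name, value in scores.items()), 2)


# Hypothetical vendor: strong engineering and communication, weaker security and pricing.
vendor_a = {
    "product_ux": 4,
    "engineering_quality": 5,
    "delivery_operations": 4,
    "security_privacy": 3,
    "backend_integration": 4,
    "communication_ownership": 5,
    "commercial_fit": 3,
}
print(weighted_total(vendor_a))  # 0.60 + 1.00 + 0.80 + 0.45 + 0.40 + 0.50 + 0.30 = 4.05
```

Rejecting incomplete scorecards keeps a vendor from looking better simply because a weak dimension was left blank.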

The fastest way to validate a firm: a paid discovery sprint

If you are choosing between two or three strong candidates, a small paid discovery sprint can de-risk the decision.

A good sprint outcome is not “a bunch of meetings.” It is tangible material you can reuse regardless of who builds:

- A prioritized scope for V1

- A prototype that resolves key UX risks

- Architecture decisions with tradeoffs documented

- A delivery plan with assumptions and risks

Appzay’s roadmap article can help you structure what should come out of this phase: Mobile application development roadmap for funded startups.

Frequently Asked Questions

How many mobile app development firms should I compare?

Two to four is usually enough. More than that often creates decision fatigue without improving quality.

Should I prioritize a firm’s portfolio or their delivery process?

Portfolio matters for UX craft and taste, but delivery process predicts whether you will ship on time and iterate safely. CTO-style evaluation weighs both, with more emphasis on evidence of execution.

How do I know if a firm can handle App Store and Google Play risk?

Ask for their release runbook, examples of past rejections, and how they prepare privacy disclosures, reviewer notes, and phased rollouts.

What is a reasonable way to compare bids that use different scopes?

Normalize around outcomes: define V1 scope and non-negotiables, then ask each firm to price the same deliverables, plus a clear change-control approach for anything outside scope.

When should I hire in-house instead of using an agency?

If mobile is your core differentiator and you can recruit senior talent quickly, in-house can be ideal. If you need speed to market with a high bar for quality, a premium end-to-end partner can compress time and reduce early hiring risk.

Build with a partner who thinks like a CTO

If you are a funded startup and want a team that can own the full path from concept to App Store, not just “write code,” Appzay provides end-to-end mobile app development with product strategy, UX, native engineering, scalable architecture, CI/CD, launch support, and proactive maintenance.

Explore how Appzay works and start a conversation at Appzay.